The generative AI race has traditionally focused on ever-larger models for better performance. As power consumption and memory limits become harder constraints, however, the conversation is shifting towards architectural efficiency. The Liquid AI team is pushing this shift with LFM2-24B-A2B, a 24-billion-parameter model aimed at setting a new standard for edge-capable AI.

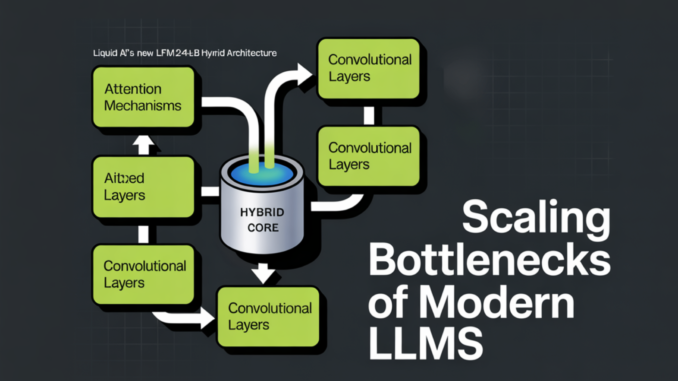

The ‘A2B’ in the model’s name refers to Attention-to-Base. Traditional Transformers use Softmax Attention in every layer, which leads to massive Key-Value caches and high VRAM usage. The Liquid AI team instead uses a hybrid structure: the ‘Base’ layers consist of efficient gated short convolution blocks, while the ‘Attention’ layers use Grouped Query Attention (GQA).
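To make the idea of a gated short convolution block more concrete, here is a minimal single-channel sketch in plain Python. It is an illustration under assumptions, not Liquid AI's implementation: the function name `gated_short_conv`, the kernel values, and the fact that the gates are passed in directly (in a real model they would be computed from the input by learned projections) are all hypothetical.

```python
def gated_short_conv(x, b_gate, c_gate, kernel):
    """Causal short convolution over one channel, with an input gate and an output gate.

    Illustrative only: gates are supplied by the caller rather than
    produced by learned projections.
    """
    # Input gate: scale each position before the convolution.
    gated = [b * v for b, v in zip(b_gate, x)]
    out = []
    for t in range(len(x)):
        acc = 0.0
        # Causal window: position t only sees positions t, t-1, ..., t-(k-1).
        for j, w in enumerate(kernel):
            if t - j >= 0:
                acc += w * gated[t - j]
        # Output gate: scale the convolved value.
        out.append(c_gate[t] * acc)
    return out


x = [1.0, 2.0, 3.0, 4.0]
ones = [1.0] * len(x)
y = gated_short_conv(x, ones, ones, kernel=[1.0, 1.0, 1.0])
print(y)
```

The point of the sketch is the memory profile: each output depends only on the last `len(kernel)` inputs, so the state carried during generation has a fixed size, whereas an attention layer's Key-Value cache grows with every token.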

The LFM2-24B-A2B model has 40 layers in total, split 1:3 between attention and convolution: 10 Attention Blocks and 30 Convolution Blocks. By combining a small number of GQA blocks with a majority of gated convolution layers, the model keeps the high-resolution retrieval and reasoning of a Transformer while ensuring fast prefill and low memory usage.
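A rough back-of-the-envelope calculation shows why the layer split matters for memory. The layer counts and context length below come from the article; the number of KV heads, the head dimension, and the 16-bit cache precision are hypothetical placeholders, since the article does not state them. The small fixed-size state of the convolution layers is ignored.

```python
def kv_cache_bytes(n_attn_layers, n_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Size of the Key-Value cache for one sequence.

    The factor 2 accounts for storing both K and V in every attention layer.
    """
    return 2 * n_attn_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


TOTAL_LAYERS = 40          # from the article
ATTN_LAYERS = 10           # from the article
CONV_LAYERS = TOTAL_LAYERS - ATTN_LAYERS
SEQ_LEN = 32_768           # context length from the article

N_KV_HEADS = 8             # hypothetical: not stated in the article
HEAD_DIM = 128             # hypothetical: not stated in the article

hybrid = kv_cache_bytes(ATTN_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)
all_attention = kv_cache_bytes(TOTAL_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)

print(f"conv layers:          {CONV_LAYERS}")
print(f"hybrid KV cache:      {hybrid / 2**30:.2f} GiB")
print(f"all-attention cache:  {all_attention / 2**30:.2f} GiB")
print(f"ratio:                {hybrid / all_attention:.2f}")
```

Whatever head configuration is assumed, the ratio depends only on the layer counts: caching 10 attention layers instead of 40 cuts the Key-Value cache to a quarter at the same context length.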

A key feature of the LFM2-24B-A2B model is its Sparse Mixture of Experts (MoE) design. Although the model has 24 billion parameters in total, only 2.3 billion are activated per token. This design allows the model to run on devices with limited RAM, such as high-end consumer laptops and desktops with integrated GPUs or dedicated NPUs, without the need for data-center-grade hardware.
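The arithmetic behind the sparse design can be sketched as follows. The parameter counts are from the article; the quantization levels are assumptions chosen for illustration, and the estimate covers weights only (no activations, cache, or runtime overhead). Note that in a standard MoE setup all experts still have to be stored, so sparsity mainly reduces compute per token rather than the size of the weights.

```python
TOTAL_PARAMS = 24e9    # from the article
ACTIVE_PARAMS = 2.3e9  # from the article


def weight_gib(n_params, bits_per_param):
    """Approximate storage for the weights alone, in GiB."""
    return n_params * bits_per_param / 8 / 2**30


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active per token: {active_fraction:.1%} of all parameters")

# Hypothetical precisions; the article does not say which formats are shipped.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_gib(TOTAL_PARAMS, bits):.1f} GiB")
```

Under these assumptions, roughly one parameter in ten is used for any given token, and a 4-bit copy of the weights is the variant that plausibly fits in the memory of a high-end consumer machine.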

On benchmarks, the LFM2-24B-A2B model is reported to outperform larger models on logic and reasoning tasks such as GSM8K and MATH-500. It also achieves high throughput, reaching 26.8K total tokens per second on a single NVIDIA H100 using vLLM. In addition, the model has a 32K-token (32,768) context window, optimized for privacy-sensitive RAG pipelines and local document analysis.

Technical cheat sheet for the LFM2-24B-A2B model:

| Specification | Value |
| --- | --- |
| Total parameters | 24 billion |
| Active parameters | 2.3 billion |
| Architecture | Hybrid (Gated Convolution + GQA) |
| Layers | 40 (30 Base / 10 Attention) |
| Context length | 32,768 tokens |
| Training data | 17 trillion tokens |

Key takeaways from the LFM2-24B-A2B model include its hybrid ‘A2B’ architecture, Sparse MoE efficiency, true edge capability, and state-of-the-art performance compared to larger competitors. The model is designed to be fully deployable on consumer-grade hardware, providing high efficiency and performance in long-context tasks.

