Much of the recent progress in generative AI relies on Latent Diffusion Models (LDMs) to keep the computational cost of high-resolution synthesis manageable. These models compress data into a lower-dimensional latent space, so the generative model works on far fewer dimensions than the raw data. However, a key trade-off persists: a latent space with lower information density is easier to model but sacrifices reconstruction quality, whereas higher density allows near-perfect reconstruction but requires greater modeling capacity.

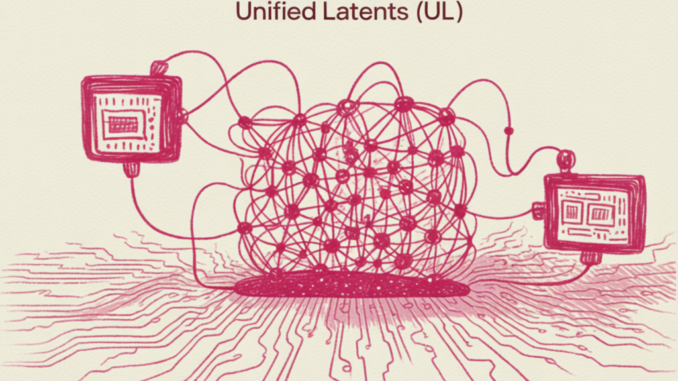

Researchers at Google DeepMind have introduced Unified Latents (UL), a framework designed to address this trade-off systematically. UL learns latent representations that are regularized by a diffusion prior and decoded by a diffusion model.

The architecture of Unified Latents is based on three key technical components:

1. Fixed Gaussian Noise Encoding: UL uses a deterministic encoder that predicts a single clean latent z and then adds a fixed amount of Gaussian forward noise, so that the encoded latent has a log signal-to-noise ratio (log-SNR) of λ(0)=5.

2. Prior-Alignment: The prior diffusion model is aligned with the minimum noise level determined by the encoder, simplifying the Kullback-Leibler (KL) term in the Evidence Lower Bound (ELBO) to a weighted Mean Squared Error (MSE) over noise levels.

3. Reweighted Decoder ELBO: The decoder employs a sigmoid-weighted loss, which sets a bound on the latent bitrate while allowing the model to prioritize different noise levels.
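The first and third components can be made concrete with a little arithmetic. The following is a minimal sketch, not the authors' implementation: it assumes a variance-preserving noise schedule (alpha² + sigma² = 1), which the article does not specify, and the `bias` parameter of the sigmoid weight is a hypothetical knob. The `gaussian_channel_bits` helper uses the textbook Gaussian-channel capacity for a unit-variance signal as an intuition for why fixed noise caps the bitrate; it is not necessarily the exact bound derived for UL.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def vp_coefficients(log_snr):
    """Return (alpha, sigma) such that alpha^2 + sigma^2 = 1 and
    log(alpha^2 / sigma^2) == log_snr.
    Assumption: variance-preserving schedule (not stated in the article)."""
    alpha = math.sqrt(sigmoid(log_snr))
    sigma = math.sqrt(sigmoid(-log_snr))
    return alpha, sigma


def encode_with_fixed_noise(z_clean, log_snr_0=5.0, rng=None):
    """Add a fixed amount of Gaussian noise to a clean latent vector.
    `z_clean` stands in for the output of a deterministic encoder."""
    rng = rng or random.Random(0)
    alpha, sigma = vp_coefficients(log_snr_0)
    return [alpha * z + sigma * rng.gauss(0.0, 1.0) for z in z_clean]


def sigmoid_weight(log_snr, bias=0.0):
    """Sigmoid loss weight over noise levels; it down-weights high log-SNR
    (nearly clean) inputs. `bias` is a hypothetical hyperparameter."""
    return sigmoid(bias - log_snr)


def gaussian_channel_bits(log_snr):
    """Textbook Gaussian-channel capacity in bits per latent dimension,
    assuming the clean latent has at most unit variance."""
    return 0.5 * math.log2(1.0 + math.exp(log_snr))


if __name__ == "__main__":
    alpha0, sigma0 = vp_coefficients(5.0)
    print("alpha, sigma at log-SNR 5:", alpha0, sigma0)
    print("noised latent:", encode_with_fixed_noise([0.5, -1.0, 2.0]))
    print("bits per dimension (heuristic):", gaussian_channel_bits(5.0))
    print("loss weight at log-SNR 0:", sigmoid_weight(0.0))
```

Under these assumptions, a log-SNR of 5 corresponds to a signal-to-noise ratio of e^5, so the added noise is small compared with the signal but still limits how much information each latent dimension can carry.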

UL is trained in two stages, the first focused on learning the latents and the second on generation quality:

– Stage 1: Joint Latent Learning – The encoder, diffusion prior, and diffusion decoder are trained together to learn latents that are encoded, regularized, and modeled simultaneously.

– Stage 2: Base Model Scaling – With the encoder and decoder frozen, a new ‘base model’ is trained on the latents with a sigmoid-weighted loss to improve generation quality. This stage allows for larger model sizes and batch sizes.
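The two stages differ mainly in which components receive gradient updates. The sketch below only illustrates that bookkeeping; the `Component` class and the component names are hypothetical, and no real training is performed.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    trainable: bool = True


def stage1_components():
    """Stage 1: encoder, diffusion prior, and diffusion decoder train jointly."""
    return [
        Component("encoder"),
        Component("diffusion_prior"),
        Component("diffusion_decoder"),
    ]


def stage2_components():
    """Stage 2: encoder and decoder are frozen; a new base model is trained
    on the latents. The stage-1 prior is left out here because the article
    does not say how it is handled after stage 1."""
    return [
        Component("encoder", trainable=False),
        Component("diffusion_decoder", trainable=False),
        Component("base_model"),
    ]


def trainable_names(components):
    """Names of the components that would receive gradient updates."""
    return [c.name for c in components if c.trainable]


if __name__ == "__main__":
    print("stage 1 trains:", trainable_names(stage1_components()))
    print("stage 2 trains:", trainable_names(stage2_components()))
```

In a real setup the `trainable` flag would map onto whatever mechanism the framework uses to exclude parameters from the optimizer.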

Unified Latents offers a favorable trade-off between training compute (FLOPs) and generation quality. On ImageNet-512, UL outperformed previous approaches when training cost is compared against generation quality, measured by Fréchet Inception Distance (FID). On the Kinetics-600 video benchmark, UL set a new state of the art (SOTA) for video generation.

Key Takeaways from Unified Latents:

– Integrated Diffusion Framework: UL jointly optimizes an encoder, a diffusion prior, and a diffusion decoder, so the latents are learned together with the models that regularize and decode them.

– Fixed-Noise Information Bound: The deterministic encoder adds fixed Gaussian noise, providing an interpretable upper bound on the latent bitrate.

– Two-Stage Training Strategy: Initial joint training followed by training a larger ‘base model’ on latents enhances sample quality.

– State-of-the-Art Performance: UL achieved competitive FID on ImageNet-512 with fewer training FLOPs and set a new SOTA FVD on Kinetics-600.

