When running LLMs at scale, the binding constraint is usually GPU memory rather than compute, mainly because each request needs a KV cache that stores the keys and values of every token it has processed. In traditional setups, a large fixed memory block is reserved per request based on the maximum sequence length, which leaves most of that block unused and limits concurrency. Paged Attention improves this by breaking the KV cache into small fixed-size pages that are allocated only when needed, similar to how virtual memory works. It also allows multiple requests with the same starting prompt to share pages and duplicate them only when their outputs start to differ. This approach greatly improves memory efficiency and allows significantly higher throughput with very little overhead.

In this article, we simulate the naive KV cache allocator, build a working Paged Attention implementation with a block table and Copy-on-Write prefix sharing, and measure the utilisation gap across batch sizes of 10 to 200 concurrent requests.

```python
import random
from collections import defaultdict

import numpy as np
import matplotlib.pyplot as plt
import matplotlib.patches as mpatches

# Fix both RNGs so the simulation is reproducible
random.seed(42)
np.random.seed(42)
```

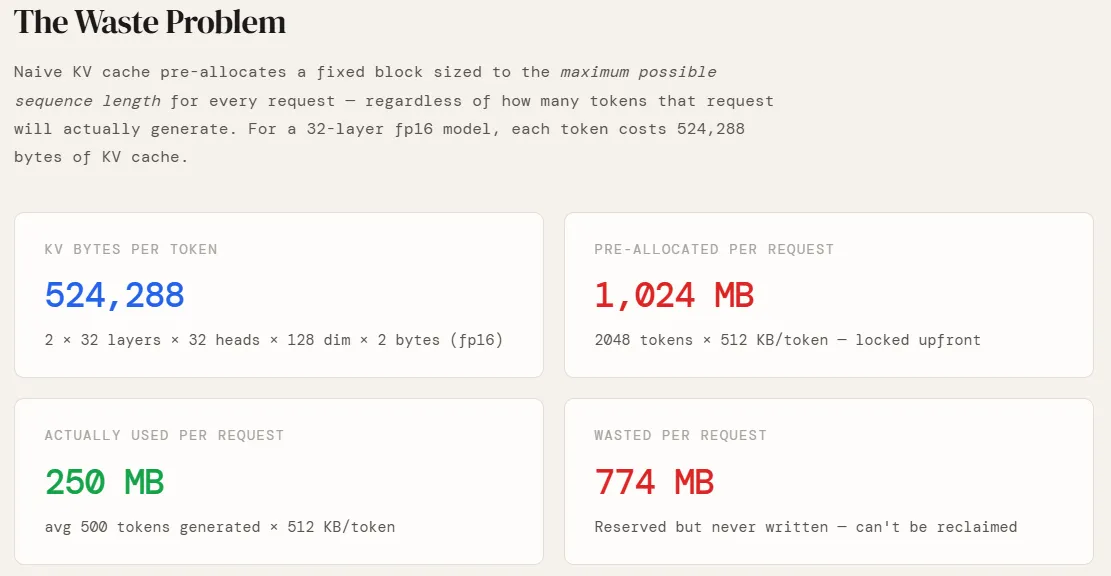

Before simulating anything, we need to know how much GPU memory a single token actually costs. This depends entirely on the model's architecture. We use a GPT-style configuration: 32 layers, 32 attention heads, 128 dimensions per head, stored in fp16 (2 bytes per value). The factor of 2 at the front accounts for both the Key and Value projections. There is no Q cache, because each decoding step only needs the query of the current token. Multiplying these out gives 2 × 32 × 32 × 128 × 2 = 524,288 bytes, or 512 KB, per token. This is the fundamental unit everything else is built on: pre-allocation sizes, page counts, and wasted memory all scale directly from this number.

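```python
NUM_LAYERS = 32      # transformer layers (32 in the configuration described above)
NUM_HEADS = 32       # attention heads per layer
HEAD_DIM = 128       # dimensions per head
BYTES_FP16 = 2       # bytes per fp16 value
PAGE_SIZE = 16       # tokens per page (vLLM default)
MAX_SEQ_LEN = 2048   # tokens reserved per request by the naive allocator

# Leading 2 = one Key vector plus one Value vector per token, per head, per layer
KV_BYTES_PER_TOKEN = 2 * NUM_LAYERS * NUM_HEADS * HEAD_DIM * BYTES_FP16
KV_MB_PER_TOKEN = KV_BYTES_PER_TOKEN / 1024 / 1024
```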

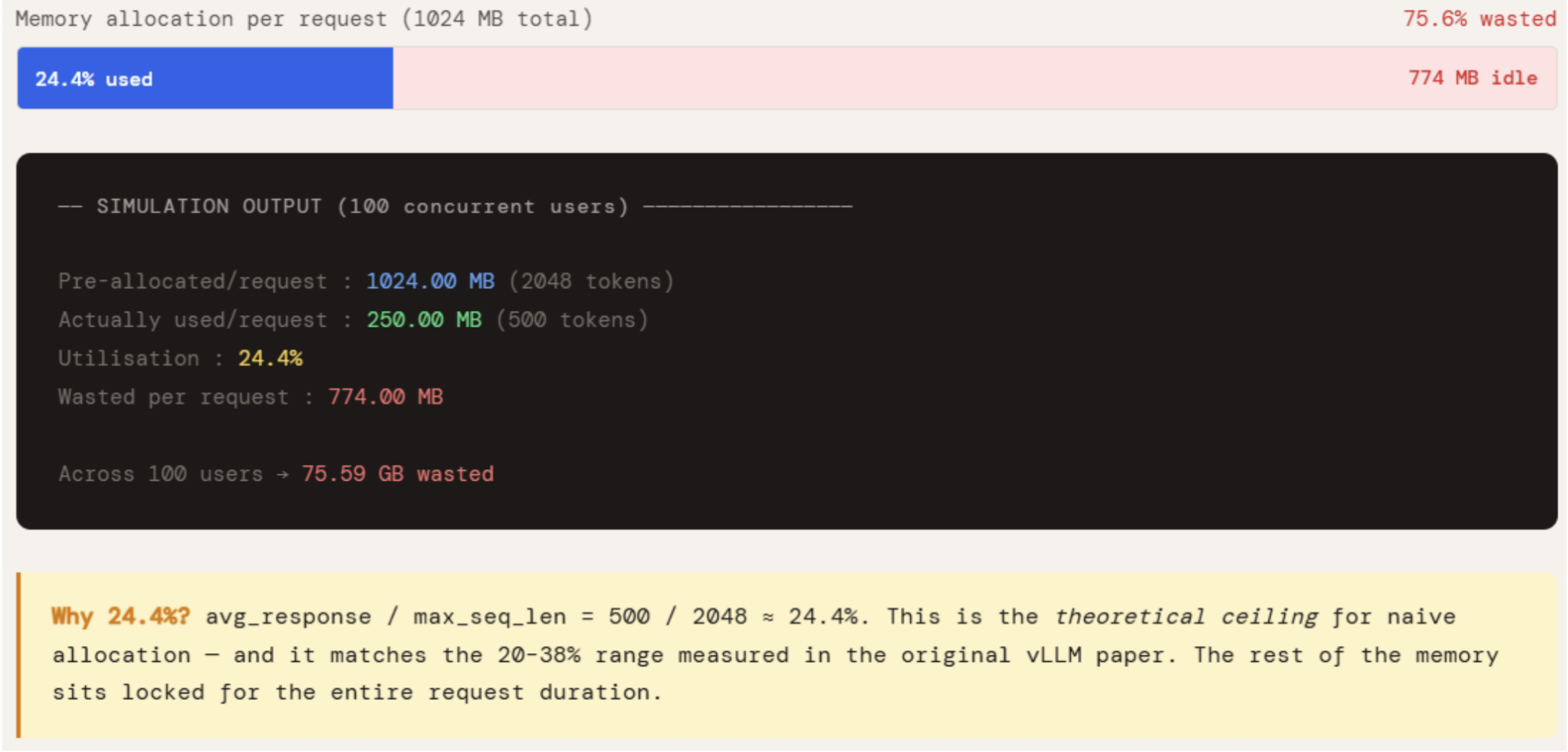

The naive approach is simple: when a request arrives, it is given a contiguous block of GPU memory sized for the maximum sequence length, 2048 tokens in this case. The response length is unknown upfront, so the worst case is reserved.

AVG_RESPONSE is set to 500, which is a realistic average for a production chatbot. Multiplying AVG_RESPONSE by KV_MB_PER_TOKEN gives the memory actually written, and multiplying MAX_SEQ_LEN by KV_MB_PER_TOKEN gives the memory that was locked. The gap between the two is the waste.

The numbers make the problem concrete. Each request pre-allocates 1024 MB but uses only 250 MB, a utilisation of 24.4%. The remaining 774 MB stays reserved for the entire duration of the request, unavailable to any other request. Across 100 concurrent users, that is about 75.6 GB of GPU memory doing nothing. This is not an edge case: it is the default behavior of any serving system that reserves the maximum sequence length per request, and it is exactly why naive serving systems hit an out-of-memory (OOM) wall long before the GPU is computationally saturated.

```python
print("SECTION 1 - Naive KV Cache: The Waste Problem")
print("=" * 60)

AVG_RESPONSE = 500  # realistic average tokens generated

pre_allocated_mb = MAX_SEQ_LEN * KV_MB_PER_TOKEN   # reserved up front
actually_used_mb = AVG_RESPONSE * KV_MB_PER_TOKEN  # actually written

print(f"\nKV cache per token : {KV_BYTES_PER_TOKEN:,} bytes")
print(f"Pre-allocated/request : {pre_allocated_mb:.2f} MB ({MAX_SEQ_LEN} tokens)")
print(f"Actually used/request : {actually_used_mb:.2f} MB ({AVG_RESPONSE} tokens)")
print(f"Utilisation : {actually_used_mb / pre_allocated_mb * 100:.1f}%")
print(f"Wasted per request : {pre_allocated_mb - actually_used_mb:.2f} MB")

NUM_USERS = 100
wasted_gb = (pre_allocated_mb - actually_used_mb) * NUM_USERS / 1024

print(f"\nAcross {NUM_USERS} concurrent users → {wasted_gb:.2f} GB wasted")
print("\n→ Naive systems utilise only 20-38% of allocated KV cache memory")
print(" (source: original Paged Attention / vLLM paper)")
```

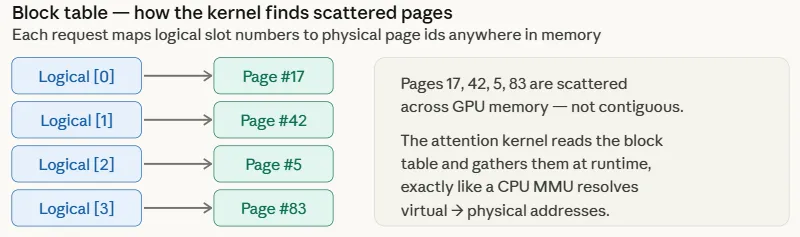

Two classes are introduced here to simulate how Paged Attention actually works at the memory management level.

PagePool represents the physical GPU memory pool — a flat array of equal-size pages, each holding 16 tokens. It maintains a free list and a ref count per page. When a page’s ref count drops to zero, it is immediately returned to the free list and becomes available to any new request.

The key difference from naive allocation is that there are no reserved holes, no external fragmentation, and no memory tied to a finished request. PagedRequest represents a single inference request and holds a block_table that maps logical page indices to physical page ids in the pool. Pages are claimed from the pool only when generate_token() needs room for the next token, so no memory is touched before it is required.

Five requests with different token counts are run, and the output shows pages allocated in proportion to actual usage. When a request is freed, its pages go back to the pool immediately and are reusable. Pool utilisation may appear low here because the pool is provisioned far larger than these few small requests need, but in a fully loaded production pool it would be near 98%. The last-page waste is minimal in this scenario, and it typically averages around half a page per request.
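The two classes described above can be sketched roughly as follows. This is a minimal illustration rather than the article's exact code: the names PagePool, PagedRequest, block_table and generate_token() come from the text, while allocate(), release(), free(), pages_in_use, the pool size of 64 pages and the five token counts are assumptions chosen for the example.

```python
PAGE_SIZE = 16  # tokens per page, as in the article


class PagePool:
    """Fixed pool of equal-size pages with a free list and per-page ref counts."""

    def __init__(self, num_pages):
        self.num_pages = num_pages
        self.free_list = list(range(num_pages))  # ids of unused physical pages
        self.ref_count = [0] * num_pages

    def allocate(self):
        # Hypothetical method name: hand out one free page
        if not self.free_list:
            raise MemoryError("page pool exhausted")
        page_id = self.free_list.pop()
        self.ref_count[page_id] = 1
        return page_id

    def release(self, page_id):
        # A page returns to the free list as soon as nobody references it
        self.ref_count[page_id] -= 1
        if self.ref_count[page_id] == 0:
            self.free_list.append(page_id)

    @property
    def pages_in_use(self):
        return self.num_pages - len(self.free_list)


class PagedRequest:
    """One inference request; block_table maps logical page -> physical page id."""

    def __init__(self, pool):
        self.pool = pool
        self.block_table = []
        self.num_tokens = 0

    def generate_token(self):
        # Claim a new page only when the current last page is full (or none exists)
        if self.num_tokens % PAGE_SIZE == 0:
            self.block_table.append(self.pool.allocate())
        self.num_tokens += 1

    def free(self):
        for page_id in self.block_table:
            self.pool.release(page_id)
        self.block_table = []
        self.num_tokens = 0


pool = PagePool(num_pages=64)       # assumed pool size
requests = []
for n_tokens in (5, 16, 17, 40, 100):  # assumed token counts
    req = PagedRequest(pool)
    for _ in range(n_tokens):
        req.generate_token()
    requests.append(req)
    print(f"{n_tokens:>3} tokens -> {len(req.block_table)} pages")

print("pages in use:", pool.pages_in_use)                 # 1 + 1 + 2 + 3 + 7 = 14
requests[-1].free()
print("after freeing the 100-token request:", pool.pages_in_use)  # 7
```

Page counts follow the ceiling of tokens divided by PAGE_SIZE, so a 17-token request takes two pages while a 16-token request takes one. Only the last page of each request can be partly empty.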

In production, most requests to a deployed LLM begin with the same system prompt. With naive allocation, each request stores its own full copy of that prompt's KV cache, so 10 concurrent requests with a 200-token system prompt hold the same data in 10 separate memory regions. To avoid this, PagePool provides two methods, share() and cow_copy(). All requests point at the same physical pages for the shared prefix, and a private copy is created only when a request needs to write to a shared page, which yields significant memory savings.

The system prompt occupies 13 pages (200 tokens at 16 tokens per page, rounded up). Each of the 10 user requests points its block table at these same physical pages, so 13 physical pages are used instead of the 130 that naive allocation would need. At 8 MB per page, the 117 pages avoided amount to 936 MB saved from a single shared prefix.
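The savings figure can be reproduced with a few lines of arithmetic. The sketch below uses only numbers stated in the article (16-token pages, 512 KB per token, a 200-token prompt, 10 requests); the variable names are illustrative.

```python
import math

PAGE_SIZE = 16            # tokens per page
KV_MB_PER_TOKEN = 0.5     # 524,288 bytes per token = 0.5 MB
SYSTEM_PROMPT_TOKENS = 200
NUM_REQUESTS = 10

page_mb = PAGE_SIZE * KV_MB_PER_TOKEN                        # 8 MB per page
prompt_pages = math.ceil(SYSTEM_PROMPT_TOKENS / PAGE_SIZE)   # 13 pages
naive_pages = prompt_pages * NUM_REQUESTS                    # 130 pages, one copy each
shared_pages = prompt_pages                                  # 13 pages, one shared copy
saved_mb = (naive_pages - shared_pages) * page_mb            # 117 pages * 8 MB

print(f"pages per prompt : {prompt_pages}")
print(f"naive vs shared  : {naive_pages} vs {shared_pages} pages")
print(f"memory saved     : {saved_mb:.0f} MB")               # 936 MB
```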

When one request generates a unique token, a new page is allocated as its private copy using the cow_copy() method, while the other requests continue to share the original page unaffected. This Copy-on-Write (CoW) approach ensures that memory is shared until divergence occurs, at which point private copies are created.
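A rough sketch of how share() and cow_copy() might behave is shown below. Only the two method names come from the text. Everything else is an assumption: the ref-count bookkeeping, the 32-page pool, the idea that the prefix itself holds one reference, and the choice of request 3 as the one that diverges. The sketch tracks page ownership only and omits the copy of the actual KV data that a real system would perform.

```python
class PagePool:
    """Page pool with reference counting, sharing and copy-on-write (sketch)."""

    def __init__(self, num_pages):
        self.free_list = list(range(num_pages))
        self.ref_count = [0] * num_pages

    def allocate(self):
        page_id = self.free_list.pop()
        self.ref_count[page_id] = 1
        return page_id

    def share(self, page_id):
        # Another request reads the same physical page; nothing is copied
        self.ref_count[page_id] += 1
        return page_id

    def cow_copy(self, page_id):
        # Return a page the caller may safely write to
        if self.ref_count[page_id] == 1:
            return page_id                # sole owner: write in place
        self.ref_count[page_id] -= 1      # drop this request's shared reference
        return self.allocate()            # private page (KV data copy omitted here)


pool = PagePool(num_pages=32)  # assumed pool size

# 200-token system prompt -> 13 pages, held once by the cached prefix
prompt_pages = [pool.allocate() for _ in range(13)]

# 10 requests point their block tables at the same physical pages
block_tables = [[pool.share(p) for p in prompt_pages] for _ in range(10)]
print("physical pages in use:", 32 - len(pool.free_list))   # 13

# Request 3 diverges: it must write into the partly filled last prompt page
block_tables[3][-1] = pool.cow_copy(block_tables[3][-1])

print("physical pages in use:", 32 - len(pool.free_list))   # 14
print("request 3 has a private last page:",
      block_tables[3][-1] != prompt_pages[-1])               # True
print("request 0 still shares the original:",
      block_tables[0][-1] == prompt_pages[-1])               # True
```

Only the page being written is duplicated. The other 12 prompt pages stay shared by all 10 requests, so divergence costs one page per diverging request rather than a full copy of the prefix.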

Finally, two functions, naive_utilisation and paged_utilisation, measure utilisation under each approach across different batch sizes. Naive utilisation is the ratio of tokens actually written to tokens reserved. Paged utilisation counts the pages each request needs and compares the tokens written with the capacity of those pages. The results show that paged utilisation stays at around 98.5% regardless of batch size, because the only waste is the partly filled last page of each request. The gap between the two values, approximately 74 percentage points, is what allows vLLM to accommodate 2 to 4 times more simultaneous requests within the same GPU memory capacity.

In the comparison, naive_utilisation draws each request's response length from a normal distribution defined by an average and a standard deviation, then divides the tokens written by the tokens reserved. paged_utilisation takes the same token counts and rounds each one up to whole pages of PAGE_SIZE tokens. Both are evaluated at each batch size, and the resulting utilisation percentages are printed side by side.
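One way the two measurements could be written is sketched here. The function names naive_utilisation and paged_utilisation come from the text; their signatures, the helper sample_lengths, the standard deviation of 200 tokens and the specific batch sizes are assumptions, so the printed percentages will not necessarily match the article's figures exactly.

```python
import numpy as np

PAGE_SIZE = 16
MAX_SEQ_LEN = 2048


def sample_lengths(batch_size, rng, avg=500, std=200):
    """Hypothetical helper: per-request token counts from a normal distribution."""
    lengths = rng.normal(avg, std, size=batch_size)
    # Keep lengths inside the valid range of 1..MAX_SEQ_LEN tokens
    return np.clip(np.rint(lengths), 1, MAX_SEQ_LEN).astype(int)


def naive_utilisation(lengths):
    """Tokens written / tokens reserved, with MAX_SEQ_LEN reserved per request."""
    return lengths.sum() / (len(lengths) * MAX_SEQ_LEN) * 100


def paged_utilisation(lengths):
    """Tokens written / capacity of the pages actually allocated."""
    pages = np.ceil(lengths / PAGE_SIZE)
    return lengths.sum() / (pages.sum() * PAGE_SIZE) * 100


rng = np.random.default_rng(42)
for batch_size in (10, 25, 50, 100, 200):
    lengths = sample_lengths(batch_size, rng)
    print(f"batch {batch_size:>3}: "
          f"naive {naive_utilisation(lengths):5.1f}%   "
          f"paged {paged_utilisation(lengths):5.1f}%")
```

Both functions receive the same sampled lengths, so the difference between the two columns comes only from the allocation policy and not from sampling noise.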

For further details and the complete notebook, refer to the provided link.

Arham Islam is a Civil Engineering graduate with a strong interest in Data Science, particularly in Neural Networks and their diverse applications.