Challenges Faced by Large Language Models in Real-World Applications

Large language models (LLMs) are running into limits in domains that demand a deep understanding of the physical world, such as robotics, autonomous driving, and manufacturing. Their inability to grasp physical cause and effect is steering investors toward world models: AMI Labs recently closed a $1.03 billion seed round, shortly after World Labs raised $1 billion.

LLMs excel at manipulating abstract knowledge through next-token prediction, but they lack grounding in physical reality. Because they struggle to predict the real-world consequences of an action, they are unreliable in applications that act on the physical world.

Leading figures in the field, including Turing Award recipient Richard Sutton and Google DeepMind CEO Demis Hassabis, have pointed to these limitations and argued that AI needs to move beyond web-based tasks and into physical environments.

Vision-language models (VLMs), which are built on LLMs, are brittle: minor changes to their inputs can break them. This fragility stems from the models’ limited understanding of real-world dynamics.

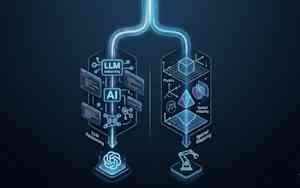

The Shift Towards World Models

Researchers are now turning to world models: internal simulators that let an AI system test hypotheses, such as what happens if it pushes an object, before taking any physical action. The shift aims to address the shortcomings of LLM-based systems.
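To make the internal-simulator idea concrete, here is a minimal sketch under toy assumptions. The "world model" is a hand-written one-dimensional dynamics function standing in for a learned one, and the planner enumerates short action sequences, rolls each one out inside the model, and keeps the sequence whose imagined end state lands closest to a goal. The names `toy_world_model`, `imagine_rollout`, and `plan`, and the 0.9 step factor, are hypothetical illustrations, not any lab's actual method.

```python
from itertools import product
from typing import Callable, List, Sequence, Tuple

# Hypothetical dynamics signature: (state, action) -> predicted next state.
Model = Callable[[float, float], float]


def toy_world_model(state: float, action: float) -> float:
    """Stand-in for a learned model: each action moves the agent 90% of the commanded distance."""
    return state + 0.9 * action


def imagine_rollout(model: Model, state: float, actions: Sequence[float]) -> List[float]:
    """Simulate an action sequence entirely inside the model; nothing happens in the real world."""
    trajectory = [state]
    for action in actions:
        state = model(state, action)
        trajectory.append(state)
    return trajectory


def plan(model: Model, state: float, goal: float, horizon: int = 3,
         candidates: Sequence[float] = (-1.0, 0.0, 1.0)) -> Tuple[Tuple[float, ...], float]:
    """Test every candidate action sequence in imagination and keep the one ending nearest the goal."""
    best_seq: Tuple[float, ...] = ()
    best_cost = float("inf")
    for seq in product(candidates, repeat=horizon):
        cost = abs(goal - imagine_rollout(model, state, seq)[-1])
        if cost < best_cost:  # strict comparison keeps the first of several equally good plans
            best_seq, best_cost = seq, cost
    return best_seq, best_cost


plan_seq, plan_cost = plan(toy_world_model, state=0.0, goal=2.0)
print(plan_seq, round(plan_cost, 3))  # prints (0.0, 1.0, 1.0) 0.2
```

The planner settles on a sequence whose imagined end state is 1.8 without taking a single real-world step. Exhaustive enumeration grows as 3^horizon, so it only suits toy problems; practical planners sample or optimize candidate sequences instead, and a real world model is learned from data rather than written by hand.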

World models span several architectural approaches, each with its own trade-offs.

JEPA: Real-Time Focus

The JEPA (Joint Embedding Predictive Architecture) approach learns to predict in a latent representation space rather than predicting world dynamics pixel by pixel. Championed by AMI Labs, JEPA models aim to mirror how humans perceive the world, attending to the interactions that matter rather than to minute details.

Because they keep relevant information and discard irrelevant detail, JEPA models are efficient in both computation and memory, which makes them well suited to real-time applications such as robotics and self-driving cars.
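The difference between predicting in pixel space and in latent space can be illustrated with a toy example. This is a hedged sketch, not the JEPA training procedure: a hand-written `encode` function stands in for a learned encoder and keeps only an object's position, while each frame also contains random background texture. A latent predictor that gets the motion right scores zero loss, whereas a pixel-level prediction is penalized for texture it could never have known. All names are hypothetical; real JEPA models learn both the encoder and the predictor, and use measures such as an exponential-moving-average target encoder to keep the representations from collapsing.

```python
import random
from typing import List

WIDTH = 16  # hypothetical frame width in pixels


def render(position: int, rng: random.Random) -> List[float]:
    """Toy 1-D frame: a bright object at `position` over random background texture."""
    frame = [rng.uniform(0.0, 0.3) for _ in range(WIDTH)]  # irrelevant, unpredictable detail
    frame[position] = 1.0  # the object we actually care about
    return frame


def encode(frame: List[float]) -> List[float]:
    """Stand-in for a learned encoder: a 1-D latent holding only the object's position."""
    return [float(max(range(len(frame)), key=frame.__getitem__))]


def predict_latent(z: List[float], action: int) -> List[float]:
    """Toy predictor that works purely in latent space: the object moves by `action` pixels."""
    return [z[0] + action]


def squared_error(a: List[float], b: List[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


rng = random.Random(0)
current, target = render(5, rng), render(6, rng)

# Latent-space objective: compare the predicted and actual embeddings of the next frame.
latent_loss = squared_error(predict_latent(encode(current), 1), encode(target))

# Pixel-space objective: even a frame with the object in the right place is penalized,
# because the next frame's background texture cannot be predicted.
pixel_guess = render(6, random.Random(1))
pixel_loss = squared_error(pixel_guess, target)

print(f"latent loss: {latent_loss}, pixel loss: {pixel_loss:.3f}")
```

The latent objective spends no effort on the background, which is the intuition behind the efficiency argument: the model only has to represent and predict what matters for the task.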

Yann LeCun, a pioneer of the JEPA architecture, stresses that world models built on this approach are controllable: they can be steered to accomplish specific goals.

Gaussian Splats: Spatial Intelligence

A second approach uses generative models to build complete 3D environments from scratch. Adopted by companies such as World Labs, it represents scenes as Gaussian splats, large collections of small, semi-transparent 3D Gaussians, so users can navigate and interact within the generated environment.
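Rendering a Gaussian-splat scene comes down to blending many soft, semi-transparent blobs in depth order. The sketch below shows that front-to-back alpha compositing step for a single pixel, under simplifying assumptions: splats are already projected to screen space, each has an isotropic footprint and a grayscale color, and the `Splat` class and `shade_pixel` function are hypothetical names. Production renderers, including the original 3D Gaussian Splatting work, use anisotropic covariances, view-dependent color, and tile-based GPU rasterization.

```python
import math
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Splat:
    x: float        # screen-space center (assumes projection from 3D already happened)
    y: float
    sigma: float    # isotropic footprint; real splats carry a full covariance
    color: float    # grayscale intensity in [0, 1]; real splats use view-dependent RGB
    opacity: float  # peak opacity in [0, 1]
    depth: float    # distance from the camera, used only for sorting


def shade_pixel(px: float, py: float, splats: Iterable[Splat], background: float = 0.0) -> float:
    """Front-to-back alpha compositing: C = sum_i c_i * a_i * prod_{j<i} (1 - a_j)."""
    color, transmittance = 0.0, 1.0
    for s in sorted(splats, key=lambda sp: sp.depth):  # nearest splat first
        d2 = (px - s.x) ** 2 + (py - s.y) ** 2
        alpha = s.opacity * math.exp(-0.5 * d2 / s.sigma ** 2)  # Gaussian falloff from the center
        color += transmittance * alpha * s.color
        transmittance *= 1.0 - alpha  # fraction of light still passing through
        if transmittance < 1e-4:  # pixel is effectively opaque; skip the remaining splats
            break
    return color + transmittance * background  # any remaining light shows the background


front = Splat(x=0.0, y=0.0, sigma=1.0, color=1.0, opacity=0.5, depth=1.0)
back = Splat(x=0.0, y=0.0, sigma=1.0, color=0.2, opacity=1.0, depth=2.0)
print(shade_pixel(0.0, 0.0, [back, front]))  # 0.5 from the front splat + 0.5 * 0.2 from the back = 0.6
```

Because each splat's contribution is a closed-form expression, a scene can be rendered from any viewpoint without a neural network evaluation per pixel, which is part of why the representation suits interactive navigation.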

This approach sharply cuts the time and cost of creating complex 3D environments and targets the spatial-intelligence gap in traditional LLMs. Promising applications include spatial computing, entertainment, and industrial design.

End-to-End Generation: Scalability

The third approach uses a single end-to-end generative model that continuously produces the scene, its dynamics, and its reactions in response to user prompts and actions. Models such as Google DeepMind’s Genie 3 and Nvidia’s Cosmos offer a simple interface both for creating interactive experiences and for synthesizing large volumes of data.

This method is computationally expensive, but it can produce synthetic training data for autonomous vehicles and robots. Waymo, an Alphabet subsidiary, uses this approach to train its self-driving cars.
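The end-to-end approach can be pictured as an autoregressive loop: each new frame is generated from the prompt, the recent frames, and the latest action, and the same loop can be driven by scripted actions to mass-produce synthetic episodes. The sketch below shows only that control flow. `WorldSession`, `synthesize`, `stub_generator`, and the bounded `context_len` window are hypothetical stand-ins; the stub returns a text label where a real model would produce video frames, and no actual Genie 3 or Cosmos API is shown.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Placeholder frame type: a real world model would emit image or video tensors.
Frame = str
# Hypothetical generator signature: (prompt, recent frames, action) -> next frame.
Generator = Callable[[str, List[Frame], str], Frame]


@dataclass
class WorldSession:
    """One interactive rollout: every step conditions on the prompt, recent history, and an action."""
    prompt: str
    generate: Generator
    context_len: int = 4  # hypothetical bounded context window
    history: List[Frame] = field(default_factory=list)

    def step(self, action: str) -> Frame:
        start = max(0, len(self.history) - self.context_len)
        context = self.history[start:]  # only the most recent frames are fed back
        frame = self.generate(self.prompt, context, action)
        self.history.append(frame)
        return frame


def synthesize(prompt: str, scripts: List[List[str]],
               generate: Generator) -> List[Tuple[List[str], List[Frame]]]:
    """Drive a fresh session per script to produce (actions, frames) training episodes."""
    episodes = []
    for actions in scripts:
        session = WorldSession(prompt, generate)
        frames = [session.step(a) for a in actions]
        episodes.append((actions, frames))
    return episodes


def stub_generator(prompt: str, context: List[Frame], action: str) -> Frame:
    """Stand-in for a model call: labels the frame with its prompt, action, and context size."""
    return f"{prompt}/{action}/ctx={len(context)}"


session = WorldSession("rainy street", stub_generator, context_len=2)
for a in ["accelerate", "turn left", "brake"]:
    print(session.step(a))
```

Keeping the generator behind a plain callable separates the session logic from any particular model; the heavy compute cost lives entirely inside `generate`, which runs once per frame, in both interactive use and offline data synthesis.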

Hybrid Architectures for Enhanced Performance

Hybrid architectures that combine elements of these approaches are emerging to exploit the strengths of each. Examples such as DeepTempo’s LogLM target specific applications like cybersecurity and anomaly detection.

As world models mature, hybrid architectures will play a key role in extending AI capabilities across industries.
