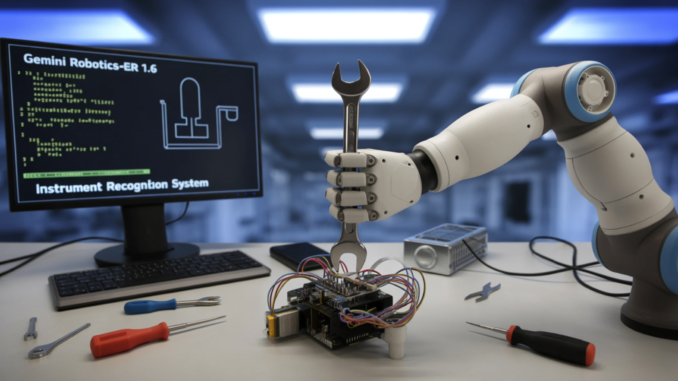

Google DeepMind Unveils Gemini Robotics-ER 1.6: The Cognitive Brain for Real-World Robots

Google DeepMind’s research team has introduced Gemini Robotics-ER 1.6, a significant upgrade to its embodied reasoning model, which is designed to serve as the ‘cognitive brain’ of robots operating in real-world environments. The model specializes in the reasoning capabilities robots need most: visual and spatial understanding, task planning, and success detection. As the high-level reasoning model for a robot, it carries out tasks by calling tools such as Google Search, vision-language-action models (VLAs), or any other user-defined functions.

Understanding the Dual-Model Approach

Google DeepMind splits robotics AI across two models. Gemini Robotics 1.5 is the vision-language-action (VLA) model: it takes visual inputs and user prompts and translates them into physical motor commands. Gemini Robotics-ER is the embodied reasoning model: it specializes in understanding physical spaces, planning, and making logical decisions. It does not control robotic limbs directly; instead, it supplies high-level guidance that the VLA model acts on.
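The division of labor can be sketched in a few lines of Python. This is a minimal illustration only: `plan`, `run`, `Step`, and the stub tools are hypothetical names invented here, the plan is hard-coded rather than produced by a model, and nothing below calls a real Gemini or robot API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    tool: str       # name of the tool the reasoning layer wants to call
    argument: str   # natural-language instruction or query for that tool


def plan(task: str) -> List[Step]:
    """Hypothetical stand-in for the reasoning model: returns a fixed plan."""
    return [
        Step("search", f"how to: {task}"),
        Step("vla", "pick up the mug"),
        Step("vla", "place the mug on the shelf"),
    ]


def run(task: str, tools: Dict[str, Callable[[str], str]]) -> List[str]:
    """Dispatch each planned step to the matching tool and collect the results."""
    log = []
    for step in plan(task):
        handler = tools[step.tool]
        log.append(handler(step.argument))
    return log


# Stub tools (assumptions): a real system would call a search API and a VLA policy.
tools = {
    "search": lambda query: f"searched: {query}",
    "vla": lambda instruction: f"executed: {instruction}",
}

log = run("put the mug away", tools)
```

The point of the sketch is the shape of the loop: the reasoning layer decides what to do and which tool to use, while the VLA stub is the only component that would ever issue motor commands.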

Enhancements in Gemini Robotics-ER 1.6

Gemini Robotics-ER 1.6 improves on its predecessors in spatial and physical reasoning, particularly pointing, counting, and success detection. The main addition in this version is instrument reading, a capability that earlier iterations lacked.

Pointing as a Foundation for Spatial Reasoning

Pointing is the model’s ability to identify precise pixel-level locations in an image. It underpins spatial reasoning, relational logic, motion trajectory mapping, grasp point identification, and constraint-based reasoning.
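To make use of a pointed location, downstream code typically has to map it onto the camera image. The sketch below assumes the output convention documented for earlier Gemini Robotics-ER releases: a JSON list whose entries carry a `label` and a `point` given as `[y, x]` normalized to 0-1000. The article does not confirm that 1.6 uses the same format, and `to_pixels` and the sample response are hypothetical.

```python
import json
from typing import List, Tuple


def to_pixels(response_text: str, width: int, height: int) -> List[Tuple[str, int, int]]:
    """Convert normalized [y, x] points (0-1000) to (label, x_px, y_px) tuples."""
    points = json.loads(response_text)
    result = []
    for item in points:
        y_norm, x_norm = item["point"]  # assumed order: y first, then x
        x_px = round(x_norm / 1000 * width)
        y_px = round(y_norm / 1000 * height)
        result.append((item["label"], x_px, y_px))
    return result


# Hand-written sample in the assumed format, not real model output.
sample = '[{"point": [500, 250], "label": "mug handle"}]'
pixels = to_pixels(sample, width=640, height=480)
```

For a 640x480 frame, the sample point lands at x = 160, y = 240. A grasp planner or trajectory generator would start from pixel coordinates like these.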

Success Detection and Multi-View Reasoning

Success detection is essential in robotics, allowing the agent to determine when a task is completed and decide on the next steps. Gemini Robotics-ER 1.6 advances multi-view reasoning, enabling better fusion of information from multiple camera streams in dynamic environments.
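One way to picture how success detection and multiple camera views fit into a control loop is the sketch below. It is an assumption-laden illustration rather than DeepMind's method: each view yields `True` (task looks done), `False` (task looks not done), or `None` (view is uninformative, for example occluded), and the names `fuse_verdicts` and `run_with_retries` are invented here. In a real system the per-view verdicts would come from the reasoning model; here they are simulated.

```python
from typing import Callable, List, Optional


def fuse_verdicts(verdicts: List[Optional[bool]]) -> bool:
    """Success needs at least one confirming view and no contradicting view."""
    confirmed = any(v is True for v in verdicts)
    contradicted = any(v is False for v in verdicts)
    return confirmed and not contradicted


def run_with_retries(attempt: Callable[[], List[Optional[bool]]],
                     max_attempts: int = 3) -> int:
    """Repeat the action until the fused verdict is success.

    Returns the number of attempts used, or -1 if every attempt failed.
    """
    for n in range(1, max_attempts + 1):
        if fuse_verdicts(attempt()):
            return n
    return -1


# Simulated per-camera verdicts: [overhead camera, wrist camera].
simulated = iter([
    [False, None],  # attempt 1: overhead view shows the task is not done
    [True, None],   # attempt 2: overhead view confirms, wrist view is occluded
])
attempts_used = run_with_retries(lambda: next(simulated))
```

The conservative fusion rule is a design choice: a single contradicting view blocks the success verdict, which favors retrying over moving on too early.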

Instrument Reading: A New Capability

Gemini Robotics-ER 1.6 adds instrument reading: the ability to interpret analog gauges, pressure meters, and sight glasses in industrial settings. This capability is critical for facility inspection tasks and was developed in collaboration with Boston Dynamics.
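As a rough illustration of what reading an analog gauge involves, the final step is simple arithmetic once the needle angle and the scale endpoints are known. The sketch below assumes a dial with a linear scale; `gauge_value` and all the numbers are hypothetical, and this is not a description of how Gemini Robotics-ER 1.6 reads instruments internally.

```python
def gauge_value(needle_deg: float, min_deg: float, max_deg: float,
                min_value: float, max_value: float) -> float:
    """Linearly map a needle angle to a reading on a dial with a linear scale."""
    if max_deg == min_deg:
        raise ValueError("scale endpoints must differ")
    # Fraction of the way the needle has travelled from scale start to scale end.
    fraction = (needle_deg - min_deg) / (max_deg - min_deg)
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("needle lies outside the marked scale")
    return min_value + fraction * (max_value - min_value)


# Hypothetical dial: 0 to 10 bar spread over a 270-degree sweep.
reading = gauge_value(needle_deg=90.0, min_deg=-45.0, max_deg=225.0,
                      min_value=0.0, max_value=10.0)
```

Here the needle sits halfway along the sweep, so the reading is 5.0 bar. The hard part in practice is the perception that precedes this step: locating the needle, the tick marks, and the printed scale limits in the image.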

Key Takeaways

- Gemini Robotics-ER 1.6 focuses on reasoning capabilities, while the VLA model handles physical motor commands.

- Pointing goes beyond simple object detection: it also supports motion trajectory mapping, grasp point identification, and constraint-based reasoning for robotic manipulation.

- Instrument reading is a significant new capability, with Gemini Robotics-ER 1.6 achieving 93% accuracy using agentic vision.

- Success detection is crucial for enabling true autonomy in robotic systems.
